The Data Architecture Divide: Enterprise AI Approaches a Storage Inflection Point

HyperFRAME Research Lens data shows that limited data infrastructure readiness, not model capability, is the primary constraint on enterprise AI scaling.

03/11/2026

Key Highlights

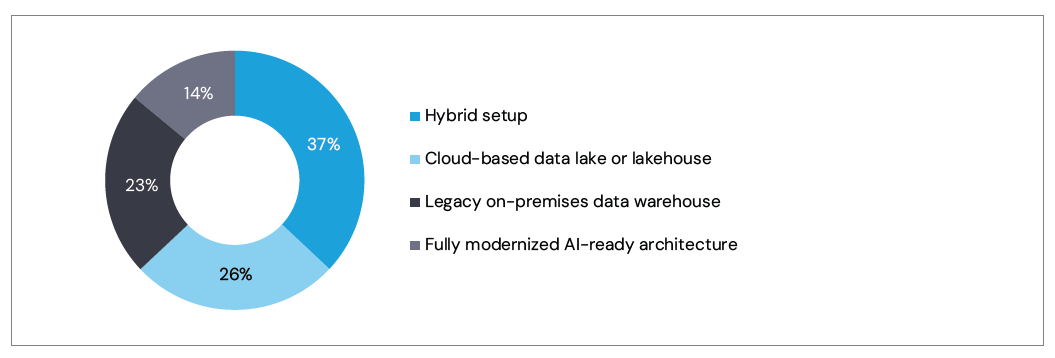

- Only 14% of organizations report a fully AI-ready data architecture, indicating limited readiness for sustained AI workloads.

- 37% operate hybrid data architectures, reflecting a transitional state between legacy systems and modern data platforms.

- 23% remain dependent on legacy on-premises data warehouses designed for reporting and batch analytics.

- 50% cite scalability as the primary barrier to expanding AI deployments.

- The findings suggest that data architecture maturity and storage infrastructure are emerging as decisive factors in determining how far enterprise AI initiatives can scale.

The News

Following our initial analysis of enterprise AI readiness, HyperFRAME Research looks at additional findings from the HyperFRAME Research Lens: State of the Enterprise AI Stack 1H 2026, a global survey of 544 enterprise decision-makers examining how organizations are progressing from AI experimentation toward production deployment. The data highlights a widening divide between enterprise AI ambition and the architectural readiness required to support it. While organizations broadly recognize the strategic importance of AI, relatively few have modernized the data foundations needed to sustain large-scale training, inference, and governance workflows. The full report and survey findings are available on the HyperFRAME Research Lens page.

Analyst Take

For most of its history, enterprise storage has been defined by familiar tradeoffs. Organizations balanced capacity against performance, performance against security, and all of it against budget and operational constraints. Those tensions remain. What has changed is the strategic weight carried by data itself.

Data is no longer simply an asset to be stored and protected. It has become the operational substrate of digital enterprises and the foundational input behind AI training and inference. As AI workloads move from experimentation into production, the expectations placed on infrastructure, particularly storage, begin to change.

The Lens data provides important context. Only 14% of organizations report a fully AI-ready data architecture, while 37% continue to operate hybrid data environments and 23% remain anchored to legacy on-premises data warehouses. These conditions help explain why 50% of enterprises identify scalability as the primary barrier to expanding AI deployments.

Graphic: Current state of enterprise core data architectures supporting AI workloads

The Lens results reveal a widening divide across enterprises. Organizations with modern data platforms and scalable storage substrates are beginning to embed AI in revenue-impacting workflows, and they are better able to move, govern, and retrieve data fast enough to support production inference. In practical terms, the competitive advantage in enterprise AI is shifting from model access to data platform readiness.

Enterprises are not progressing through AI maturity in a uniform way. Some organizations remain in structured experimentation. Others have embedded inference into production workflows where latency, accuracy, and auditability directly affect business outcomes. As AI adoption deepens, the underlying data architecture becomes increasingly consequential.

Hybrid environments illustrate this transitional stage. 37% of enterprises now operate hybrid data architectures, combining legacy platforms with newer cloud or lakehouse environments. While this approach allows organizations to modernize incrementally, it also introduces integration complexity as data moves between platforms built for different performance and governance models.

Legacy infrastructure remains another important factor. 23% of organizations still rely primarily on legacy on-premises data warehouses, many of which were designed for reporting and batch analytics rather than the continuous data access patterns required by modern AI pipelines. This helps explain why scalability emerges as the most commonly cited barrier. Half of surveyed organizations (50%) identify scalability as the primary obstacle to expanding AI deployments, reinforcing the connection between data architecture maturity and enterprise AI success.

Where Inference Meets Infrastructure

In our view, the inflection point will not arrive as a synchronized market event. It will surface where AI velocity collides with architectural limitation.

Inference workloads behave differently from traditional enterprise applications. They generate continuous, latency-sensitive reads against evolving datasets while indexes, feature stores, and access policies are updated in parallel. Context retrieval and vector search introduce persistent metadata activity that continues long after initial model training, placing constant pressure on storage performance and governance enforcement.

This shift also changes how enterprise architects evaluate infrastructure. Historically, storage systems were optimized for durability and capacity efficiency. AI inference environments instead prioritize metadata performance, parallel access, and predictable latency under constant query pressure. These operational characteristics are closer to those of high-performance data services than to traditional storage tiers, which is why infrastructure designed for analytics workloads often struggles to support AI-driven retrieval patterns.

At the same time, the traditional boundary between data platforms and storage infrastructure is beginning to blur. Lakehouse architectures increasingly depend on the performance and metadata capabilities of the underlying storage substrate, while storage platforms are evolving to expose richer data services of their own.

Many vendors now position their platforms around this AI-driven future. Strategic positioning alone, however, does not resolve underlying architectural constraints. The inflection point will emerge when enterprises begin allocating capital toward infrastructure that can sustain inference workloads while maintaining governance, resilience, and control.

What Was Announced

The HyperFRAME Research Lens provides an empirical benchmark for measuring enterprise progress toward AI maturity. The study examines how organizations are evolving their AI stacks across several domains, including strategy, data architecture, governance readiness, and deployment practices.

Within the data architecture category, the findings reveal a landscape characterized by transitional infrastructure. Only 14% of enterprises report architectures fully prepared for AI workloads, while 37% operate hybrid environments and 23% remain dependent on legacy data warehouses.

The research also highlights the growing role of modern data patterns such as Retrieval-Augmented Generation (RAG), which 78% of organizations report implementing or planning to deploy within the next year. These architectures introduce new data access patterns that place additional demands on storage performance, metadata management, and data pipeline orchestration.

Model strategy is also evolving. Nearly half of surveyed organizations report adopting hybrid LLM approaches that combine proprietary and open-source models. This multi-model strategy reflects both cost optimization and a desire to maintain architectural flexibility while avoiding dependency on a single vendor ecosystem.

The emerging multi-model strategy further amplifies the importance of architectural flexibility at the data layer. Enterprises are increasingly orchestrating multiple models, retrieval pipelines, and governance policies simultaneously, which multiplies the number of data interactions required during inference. Storage and data platforms must therefore support not only scale but also consistent policy enforcement across these heterogeneous AI workflows.

Security and governance concerns remain significant barriers to broader AI adoption, prompting many organizations to introduce stronger access controls, encryption, and data protection mechanisms as part of their AI deployments.

Looking Ahead

The Lens findings suggest that enterprise AI adoption is entering a phase where infrastructure architecture will increasingly determine outcomes. With only 14% of organizations reporting AI-ready architectures and half identifying scalability as their primary barrier, modernization of the data layer is becoming a prerequisite for sustained AI deployment.

As organizations expand AI deployments, attention will shift from individual technologies toward coordination across multiple infrastructure layers. Persistent data platforms must manage growing volumes of structured and unstructured information. Storage platforms must sustain continuous access patterns driven by training pipelines, vector retrieval, and inference workloads. Context pipelines assemble the data required for reasoning platforms, while control planes enforce governance, policy, and operational consistency.

These layers are deeply interdependent. Data platforms rely on scalable storage substrates. Context pipelines depend on reliable access to curated datasets. Control planes must coordinate policies across hybrid infrastructure spanning on-premises environments, private clouds, and hyperscale platforms. For many enterprises, the challenge will not be deploying any single component of this architecture. It will be integrating these layers into a coherent system capable of sustaining AI workloads at enterprise scale.

When enterprises standardize on platforms that sustain inference performance with embedded control and resilience, and when capital begins to follow that architectural shift, the inflection point will be clear. Until then, AI will continue to accelerate, and the systems beneath it will determine how far and how fast that acceleration can extend.

Don Gentile | Analyst-in-Residence -- Storage & Data Resiliency

Don Gentile brings three decades of experience turning complex enterprise technologies into clear, differentiated narratives that drive competitive relevance and market leadership. He has helped shape iconic infrastructure platforms including IBM z16 and z17 mainframes, HPE ProLiant servers, and HPE GreenLake, guiding strategies that connect technology innovation with customer needs and fast-moving market dynamics.

His current focus spans flash storage, storage area networking, hyperconverged infrastructure (HCI), software-defined storage (SDS), hybrid cloud storage, Ceph/open source, cyber resiliency, and emerging models for integrating AI workloads across storage and compute. By combining deep knowledge of infrastructure technologies with proven skills in positioning, content strategy, and thought leadership, Don helps vendors sharpen their story, differentiate their offerings, and achieve stronger competitive standing across business, media, and technical audiences.

Stephanie Walter | Practice Leader - AI Stack

Stephanie Walter is a results-driven technology executive and analyst in residence with over 20 years leading innovation in Cloud, SaaS, Middleware, Data, and AI. She has guided product life cycles from concept to go-to-market in both senior roles at IBM and fractional executive capacities, blending engineering expertise with business strategy and market insights. From software engineering and architecture to executive product management, Stephanie has driven large-scale transformations, developed technical talent, and solved complex challenges across startup, growth-stage, and enterprise environments.