Research Notes

Palo Alto Networks Portkey Buy Exposes Real-World AI Gateway Friction

Prisma AIRS embeds an AI Gateway control plane to address runtime policy drift, token bill shock, and unmanaged agentic traffic across fragmented enterprise AI environments.

Can Intel’s Platform Argument Outlast NVIDIA’s Network Effect?

Xeon 6+ on 18A, AET energy telemetry, and an air-cooled inference GPU define Intel’s Intelligence Center bet at Computex 2026.

Compute Continuum: Why Qualcomm Opened Computex With a Vision, Not a Pitch

Cristiano Amon described the era of agents and redefined how we will interact with everything Qualcomm powers.

HPE’s Hyper-Integrated Inflection: Fiscal Acceleration, Architectural Shifts, and Long-Term Execution Risks in the AI Era

Explosive AI demand and Juniper synergies appear to pull long-term guidance forward, but component costs and lumpy sovereign deals loom ahead.

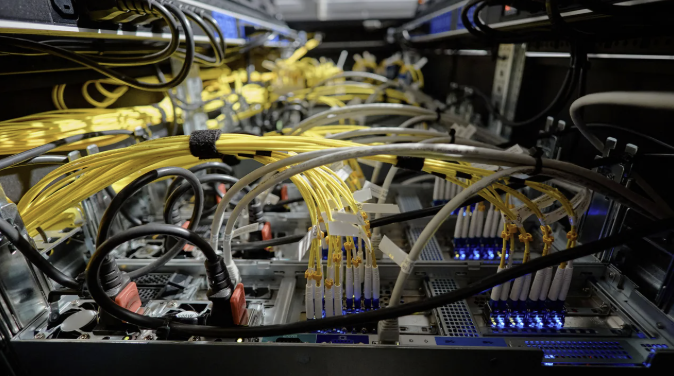

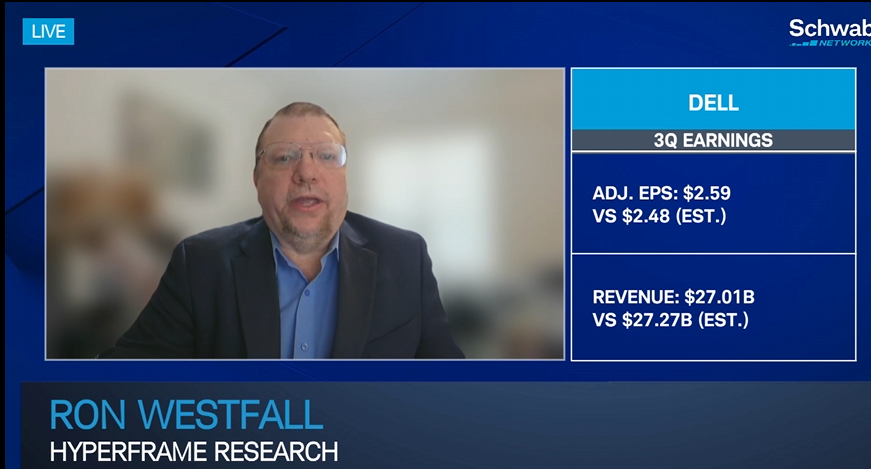

Is the Standard Server Rack Dead for Agentic AI?

Dell delivers the first liquid-cooled NVIDIA Vera Rubin rack to CoreWeave, deploying custom Arm-based Vera CPUs to fully scale autonomous agent setups.

Anthropic IPO Filing Tests the Enterprise Value of AI Platforms

Anthropic’s confidential S-1 filing, recent Series H funding, and Claude Opus 4.8 release shift the focus from model performance to operational scale, developer adoption, and governed enterprise deployment.

Cisco Is On Fire Right Now – A Strategy Breakdown

Cisco Live provides a unique opportunity to dive deep into how the company is making strategic choices and what we can expect.

How Does VAST Data Help Mistral Evolve from a Model Provider Into a Sovereign AI Platform?

Mistral’s deployment of the VAST Data Platform alongside NVIDIA GB300-powered AI factories supports the company’s effort to build AI cloud services, enterprise AI capabilities, and sovereign AI infrastructure across Europe.

Delta Air Lines’ Amazon Leo Bet Just Got More Expensive

New Glenn’s LC-36 explosion is a launch cadence problem for Amazon Leo and customers like Delta Air Lines, but the satellite math is harder than the headline suggests, and the competitive narrative is setting fast.

Is IBM Handing a Multi-Billion Dollar Subsidy to the Open Source Commons?

IBM and Red Hat launch an ambitious AI-powered clearinghouse aimed at protecting the global open source software layer from advanced algorithmic exploits.

Research

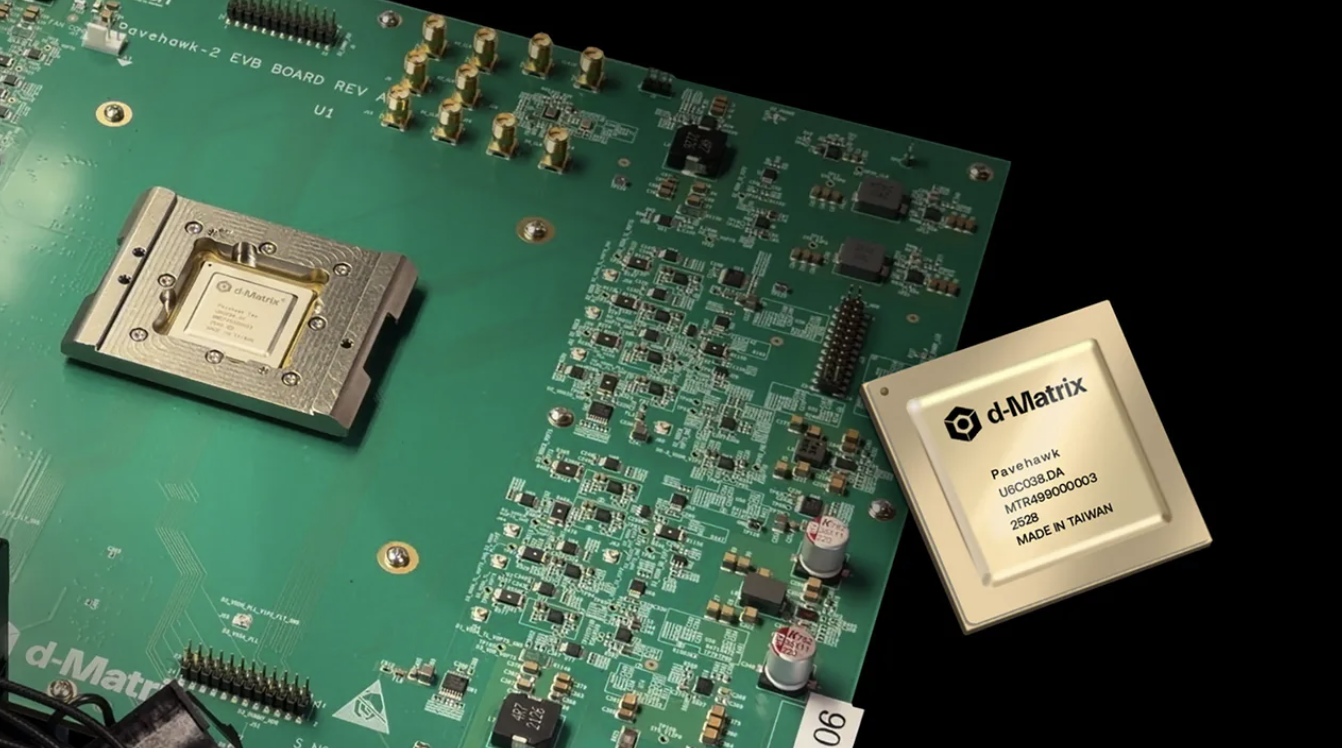

Accelerating Chip Prototype-to-Product

How MIPS and GlobalFoundries Are Compressing the FPGA-to-ASIC Path

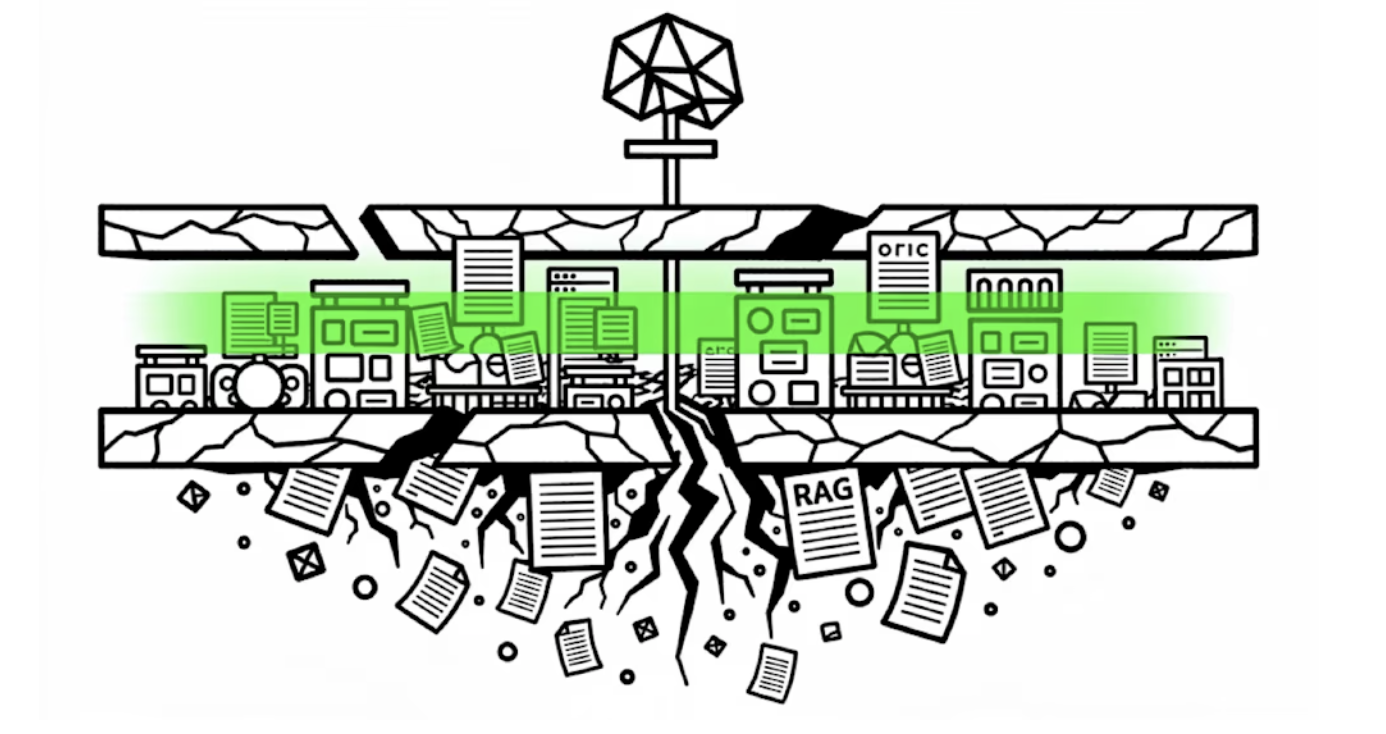

Retrieval to Reasoning: Why Agent Builders Are Really Context Engines

How Context Engineering Separates Agent Demos from Production Systems

Closing the Observability Gap for Today’s Distributed Applications

Why Internet Performance Monitoring Is Essential for Modern Applications

Choosing the Right Terminal Emulator: A Buyer’s Guide for Modern Host Access